Refrigerant Leak Detection: IR vs Semiconductor Sensors

When it comes to refrigerant leak detection, the predominant sensing technologies are Non-Dispersive InfraRed (NDIR) and Metal-Oxide Semiconductor (MOS), thanks to their ability to respond to these target gases and to the many limitations of other common detection methods such as electrochemical cells and catalytic pellistors. NDIR and MOS technologies differ both in operating principle and in price. So what are these differences, and which sensor would work better in your application?

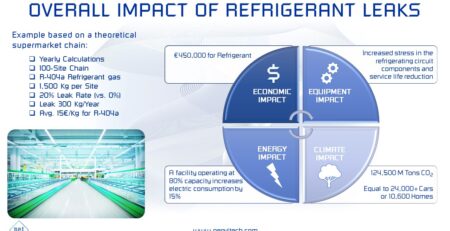

As leak detection becomes increasingly important in the refrigeration industry for safety, environmental and financial reasons, so does choosing the right technology and ensuring that its shortcomings will not prevent your system from performing its task, whether that is protecting workers and property from flammable events, complying with relevant environmental regulations or avoiding the loss of valuable refrigerant gases, whose prices have skyrocketed in the last few months.

Selectivity and accuracy

NDIR sensors detect target gases by measuring light absorption, while MOS sensors rely on oxidation/reduction reactions on a metal-oxide substrate. A first important difference is that light absorption in the IR spectrum is very gas-specific, in the sense that each gas has its own characteristic absorption properties, whereas essentially any oxidizing or reducing gas will cause reactions on the MOS substrate. This means that IR technology has far greater gas selectivity and accuracy than MOS technology, which suffers from interference from gases and chemicals other than the target. If you operate in an environment where different molecules are expected to be present at the same time, this is certainly a factor to consider in order to avoid false alarms or, worse, undetected leaks. Additionally, as virtually all refrigerant gases have absorption bands in the IR spectrum, infrared technology allows detection of virtually any type of refrigerant used nowadays, including natural options such as CO2 and hydrocarbons. MOS technology, on the other hand, is limited to HFCs, HFOs and NH3.
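
The absorption principle described above is commonly modelled with the Beer-Lambert law, in which the transmitted light intensity falls exponentially with gas concentration and optical path length. The Python sketch below is only an illustration of that relationship, not a description of how any specific sensor works: the function names, the absorptivity value and the path length are hypothetical, and real NDIR sensors typically add a reference channel and a calibration curve.

```python
import math


def transmitted_fraction(conc_ppm: float, absorptivity: float, path_cm: float) -> float:
    """Beer-Lambert law: I / I0 = exp(-absorptivity * concentration * path)."""
    return math.exp(-absorptivity * conc_ppm * path_cm)


def concentration_ppm(fraction: float, absorptivity: float, path_cm: float) -> float:
    """Invert Beer-Lambert to recover the concentration from a measured I / I0."""
    return -math.log(fraction) / (absorptivity * path_cm)


# Hypothetical values, chosen only for illustration.
ABSORPTIVITY = 1e-5   # per ppm per cm, assumed for the target gas and filter band
PATH_CM = 5.0         # assumed optical path length of the sensing chamber

fraction = transmitted_fraction(1000.0, ABSORPTIVITY, PATH_CM)
print(f"I/I0 at 1000 ppm: {fraction:.4f}")
print(f"Recovered concentration: {concentration_ppm(fraction, ABSORPTIVITY, PATH_CM):.1f} ppm")
```

Because the absorptivity depends on the gas and on the wavelength band selected by the optical filter, a different gas in the chamber changes the signal very little unless it absorbs in the same band, which is the source of the selectivity discussed above.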

Minimum Detection Limit (MDL)

A critical difference between the two technologies is the minimum detection limit (MDL). Whereas NDIR normally allows detection in the range of a few ppm, MOS thresholds are around the 100 ppm mark. This means that for safety compliance, where alarms are triggered at fractions of the Lower Flammability Limit (LFL) or of the toxicity level, corresponding to concentrations generally greater than 1,000 ppm for most refrigerants, a MOS-based detector would be adequate. But if the objective is early leak detection to avoid the loss of costly gas, then NDIR is the recommended, if not obvious, choice.
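
The reasoning above boils down to comparing the sensor's MDL with the concentration at which the alarm must trigger. The following Python sketch illustrates that comparison under stated assumptions: the function name, the safety margin, the 25% LFL alarm fraction, the example LFL and the early-leak alarm level are all hypothetical and do not come from a standard or a datasheet, while the MDL figures are the rough ones quoted above.

```python
def can_detect(alarm_ppm: float, sensor_mdl_ppm: float, margin: float = 2.0) -> bool:
    """Return True if the alarm level sits comfortably above the sensor's MDL.

    `margin` is an assumed safety factor: the alarm level must be at least
    `margin` times the MDL for the sensor to be considered adequate.
    """
    return alarm_ppm >= margin * sensor_mdl_ppm


# Rough MDL figures from the text (ppm).
NDIR_MDL_PPM = 5.0    # "a few ppm", assumed to be 5 for illustration
MOS_MDL_PPM = 100.0   # "around the 100 ppm mark"

# Hypothetical safety alarm: 25% of an assumed LFL of 20,000 ppm.
safety_alarm_ppm = 0.25 * 20_000

# Hypothetical early-leak alarm intended to limit refrigerant loss.
early_leak_alarm_ppm = 25.0

for name, mdl in (("NDIR", NDIR_MDL_PPM), ("MOS", MOS_MDL_PPM)):
    print(
        name,
        "| safety alarm:", can_detect(safety_alarm_ppm, mdl),
        "| early-leak alarm:", can_detect(early_leak_alarm_ppm, mdl),
    )
```

With these assumed numbers, both technologies cover the safety alarm, but only the NDIR sensor can resolve the early-leak level.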

Environmental conditions

The impact of ambient temperature and relative humidity should also be considered when choosing a sensor. While the influence of environmental parameters on NDIR detection is minimal, the same cannot be said of MOS technology, although performance varies greatly among different models and measures can be put in place to mitigate this effect. We recommend including a thorough analysis of environmental performance in the selection process of a MOS sensor for all applications where temperature and relative humidity differ from standard testing conditions, as is the case with walk-in freezers and cold rooms, machinery rooms and industrial cold storage facilities.

Costs

As far as costs are concerned, we have divided them into initial purchase cost and maintenance-related costs. Currently available NDIR refrigerant sensors cost between $100 and $800. MOS sensing elements are far cheaper, ranging from a few dollars to a few tens of dollars, although the cost of the electronics needed to process their low-level signal must be added; in today's microprocessor-based NDIR sensors, this processing is normally built in. On the other hand, maintenance costs clearly favor IR technology, which requires no periodic calibration thanks to its intrinsic long-term stability and has a very long service life. MOS sensors require periodic calibration (normally every 6 months or less) and replacement every 2 to 4 years, depending on the type of sensor and the application environment.
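
To see how purchase and maintenance costs trade off over time, a simple total-cost-of-ownership estimate can help. The Python sketch below is a rough illustration only: the function name, the 10-year horizon, the per-calibration cost and the specific purchase prices are hypothetical placeholders, picked within the ranges mentioned above where the text gives one, and should be replaced with real quotes.

```python
import math
from typing import Optional


def total_cost_of_ownership(
    purchase: float,
    years: float,
    calibrations_per_year: float = 0.0,
    calibration_cost: float = 0.0,
    replacement_interval_years: Optional[float] = None,
    replacement_cost: float = 0.0,
) -> float:
    """Purchase cost plus calibration and replacement costs over `years`."""
    calibration_total = years * calibrations_per_year * calibration_cost
    replacements = 0
    if replacement_interval_years:
        # Count replacements that fall strictly before the end of the period.
        replacements = max(0, math.ceil(years / replacement_interval_years) - 1)
    return purchase + calibration_total + replacements * replacement_cost


YEARS = 10  # assumed planning horizon

# NDIR: assumed $400 purchase, no periodic calibration, no replacement.
ndir = total_cost_of_ownership(purchase=400.0, years=YEARS)

# MOS: assumed $30 for element plus electronics, calibration every 6 months
# at an assumed $50 per visit, replacement every 3 years at $30.
mos = total_cost_of_ownership(
    purchase=30.0,
    years=YEARS,
    calibrations_per_year=2,
    calibration_cost=50.0,
    replacement_interval_years=3,
    replacement_cost=30.0,
)

print(f"NDIR over {YEARS} years: ${ndir:.0f}")
print(f"MOS over {YEARS} years:  ${mos:.0f}")
```

With these placeholder figures the recurring calibration visits dominate the MOS total, so the result is very sensitive to the assumed cost per calibration.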

Conclusions

In summary, within the commercial and industrial sector, IR sensors are likely to become the most practical option, assuming that their purchase cost can be driven down by economies of scale. They are far less susceptible to failure in such environments and offer the accuracy and stability needed for high-end applications. MOS sensors can be adequate for those settings, provided that the environment is free from interfering and poisoning agents and far from thermal extremes. For these same reasons, residential applications are likely to remain the ideal setting for MOS sensors, although the cost and practicalities of maintenance can somewhat limit their applicability.